Case Study · Product Design · AI Experiences

Designing trustworthy AI experiences for project work

Explored interaction models for emerging AI capabilities within real project constraints by translating complex AI behaviors, prompt generation, and system rules into intuitive workflows that support trust, security, clarity, and confident decision-making.

→ Designed interaction models for AI-assisted project workflows

→ Balanced usability with trust, security, and adoption constraints

→ Turned emerging AI capabilities into actionable product direction

Why this mattered

Making AI useful is a design problem, not just a technical one

As AI capabilities became more viable for project-based work, the challenge was no longer whether AI could be used but how to integrate it responsibly into real workflows.

Users needed experiences that felt useful without being opaque, powerful without being risky, and intelligent without disrupting how work already got done.

The opportunity was to design AI interactions that supported real project outcomes while helping users understand what the system was doing, why it was doing it, and when to trust it.

My role

Translating emerging AI capability into usable product direction

As Senior Product Designer, I explored how AI could fit into project workflows in a way that felt intuitive, secure, and operationally realistic. My role focused on shaping the interaction model, prompt experience, and trust boundaries around how AI entered the product.

Focus areas

→ Defining interaction models for AI-assisted work

→ Shaping workflows for prompt generation and AI outputs

→ Creating structures that supported trust and clarity

→ Aligning experience decisions with technical and project constraints

The design challenge

The challenge wasn’t just AI output. It was user confidence.

The experience had to help users understand what AI could help with, know how to ask for the right thing, evaluate outputs with confidence, and stay aware of security and trust boundaries.

Because AI introduces ambiguity by default, the experience needed to reduce uncertainty rather than add to it.

Key product decisions

The design decisions that made AI feel usable

Intent-first workflows

Designed around what users were trying to accomplish, not generic model input/output behavior.

Impact: Made AI interactions feel more useful and goal-driven.

Prompt generation as product design

Supported prompt construction through guidance, structure, and flow design instead of assuming user expertise.

Impact: Reduced friction and improved confidence.

Trust through visibility and control

Designed for transparency, review, and human judgment instead of black-box automation.

Impact: Increased clarity and reduced perceived risk.

Transformation moment

A clearer path from intention to trustworthy AI output

Before

- AI possibilities felt abstract and unstructured

- Prompting behavior was unclear

- Users had to infer how and when AI should be used

- Trust and security concerns created hesitation

After

- AI interactions were grounded in specific workflow moments

- Prompt generation became part of the guided experience

- Users had clearer expectations around outputs and review

- The product direction balanced intelligence with control

→ A more trustworthy and usable path for bringing AI into project work

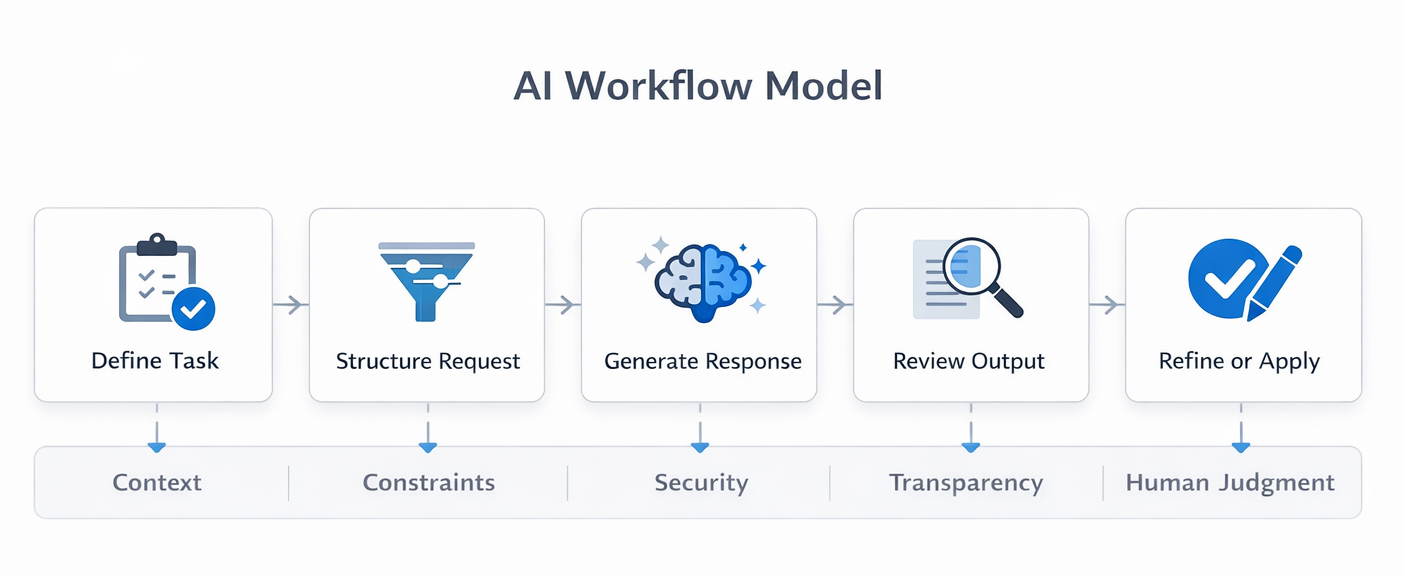

Designing the interaction model

Designing how AI behaves inside the workflow

Explored where AI should enter the workflow, how users should initiate it, what the system should reveal during generation, and how outputs should be reviewed or accepted.

The goal was to create an interaction model that felt assistive rather than intrusive, and trustworthy rather than opaque.

Product experience

Bringing AI structure into the interface

Guided prompt construction

→ Helped users move from vague intent to structured request

→ Reduced reliance on prompting expertise
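One way to picture the move from vague intent to structured request is a draft that knows which pieces are still missing and only assembles a prompt once they are filled in. The sketch below is illustrative only: the field names (`goal`, `context`, `output_format`) and the `PromptDraft` class are assumptions made for this example, not the fields or structures used in the actual product.

```python
from dataclasses import dataclass

# Hypothetical required fields; the real product's fields are not documented here.
REQUIRED_FIELDS = ("goal", "context", "output_format")


@dataclass
class PromptDraft:
    """A user's in-progress request, filled in step by step by the guided flow."""

    goal: str = ""
    context: str = ""
    output_format: str = ""

    def missing_fields(self) -> list[str]:
        """Return the required fields the user has not filled in yet."""
        return [name for name in REQUIRED_FIELDS if not getattr(self, name).strip()]

    def render(self) -> str:
        """Assemble the structured request once every field is present."""
        missing = self.missing_fields()
        if missing:
            # The interface would surface this as guidance rather than an error.
            raise ValueError(f"Still needed: {', '.join(missing)}")
        return (
            f"Goal: {self.goal.strip()}\n"
            f"Context: {self.context.strip()}\n"
            f"Output format: {self.output_format.strip()}"
        )
```

In a guided flow, `missing_fields()` could drive which question the interface asks next, so the user never has to know what a well-formed prompt looks like.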

Output review and refinement

→ Treated AI output as something to assess, refine, and integrate

→ Increased trust and reduced over-reliance
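Treating output as something to assess, refine, and integrate can be sketched as a small state model in which nothing is accepted without passing through human review. The state names and the `advance` helper below are hypothetical, chosen for this illustration rather than taken from the shipped design.

```python
from enum import Enum


class ReviewState(Enum):
    """Hypothetical lifecycle of a single AI output."""

    GENERATED = "generated"
    IN_REVIEW = "in_review"
    REFINING = "refining"
    ACCEPTED = "accepted"
    REJECTED = "rejected"


# Allowed transitions: there is deliberately no path from GENERATED
# straight to ACCEPTED, so every output is reviewed by a person first.
ALLOWED = {
    ReviewState.GENERATED: {ReviewState.IN_REVIEW},
    ReviewState.IN_REVIEW: {
        ReviewState.REFINING,
        ReviewState.ACCEPTED,
        ReviewState.REJECTED,
    },
    ReviewState.REFINING: {ReviewState.IN_REVIEW},
    ReviewState.ACCEPTED: set(),
    ReviewState.REJECTED: set(),
}


def advance(current: ReviewState, target: ReviewState) -> ReviewState:
    """Move to the target state, or raise if the transition skips review."""
    if target not in ALLOWED[current]:
        raise ValueError(f"Cannot go from {current.value} to {target.value}")
    return target
```

The design choice worth noting is the missing shortcut: refinement loops back to review, and acceptance is only reachable from review, which mirrors the goal of reducing over-reliance.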

Designing AI meant designing trust, not just interaction

The product experience needed to answer what the AI was doing, what information it used, when human judgment mattered, and where system boundaries existed.

Systems thinking

Designing not just a screen, but a system of relationships

This work required connecting user intent, workflow context, prompt logic, AI generation, trust signals, review actions, and organizational constraints into one coherent experience.

What made this work valuable

→ It translated emerging AI possibilities into clear interaction models

→ It made prompt generation more approachable and usable

→ It aligned trust, security, and product experience

→ It created a realistic path for adoption within constrained environments

Impact


Translated emerging AI capabilities into usable product direction

Made prompt generation more approachable and structured

Strengthened alignment between trust, security, and experience design

Created a stronger foundation for AI adoption in project workflows

What I’d do next

Where this could go from here

I’d focus on adaptive prompt assistance based on user context, clearer trust signals around output quality, human-in-the-loop refinement patterns, and reusable AI interaction patterns across project workflows.